You can have the greatest and most helpful content in the world, but if your technical SEO is off, all that hard work can go to waste.

For instance, if you aren’t aware that your server is blocking crawler bots from accessing your content – you won’t appear in the SERPs at all.

That’s why it’s crucial to conduct regular technical SEO audits to ensure everything is running smoothly behind the scenes.

How often you conduct audits will depend on a few factors, including the age of your website. If your site is brand new, auditing it every few weeks is a good idea to prevent any issues from affecting your progress.

For older websites, you should still run a mini-audit each month and an in-depth audit each quarter to keep any potential indexing errors or page speed issues at bay.

If that sounds excessive, remember that it’s better to be safe than sorry.

After all, 55.6% of SEOs agree that too little importance is placed on technical SEO, primarily because of how much of an impact it can have on SERP rankings.

That means technical SEO audits are well worth your time, even if you don’t uncover any issues.

The only problem is that technical SEO is so comprehensive that it’s easy to forget things during the audit.

To remedy this, read on to check out our in-depth technical SEO checklist that’ll ensure you cover all the bases.

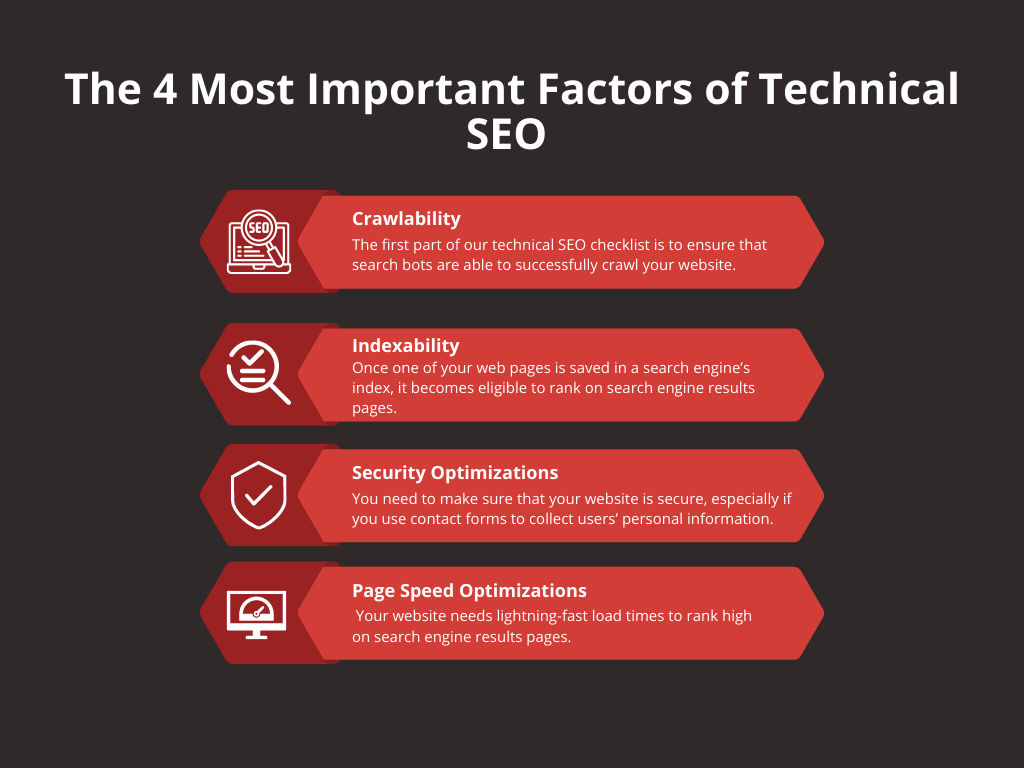

The 4 Most Important Factors of Technical SEO

To make the checklist easier to digest, we’ve split it into four main sections that represent the most important aspects of technical SEO.

They are:

- Crawlability

- Indexability

- Security

- Page Speed

Each of these factors encompasses countless technical tweaks, so let’s take a closer look at each one.

#1: Crawlability Optimizations

The first part of our technical SEO checklist is to ensure that search bots are able to successfully crawl your website.

Why is that?

It’s because these search bots need to crawl your website to gather more information about it, such as your site architecture and the keywords placed within your content.

Not only that, but the crawling process is when search engines decide what to save in their index – which is what will appear in search engine results.

So if a search engine’s bots can’t crawl your most important pages, they won’t appear in the SERPs.

Here’s a look at the optimizations you’ll need to make to improve your website’s crawlability.

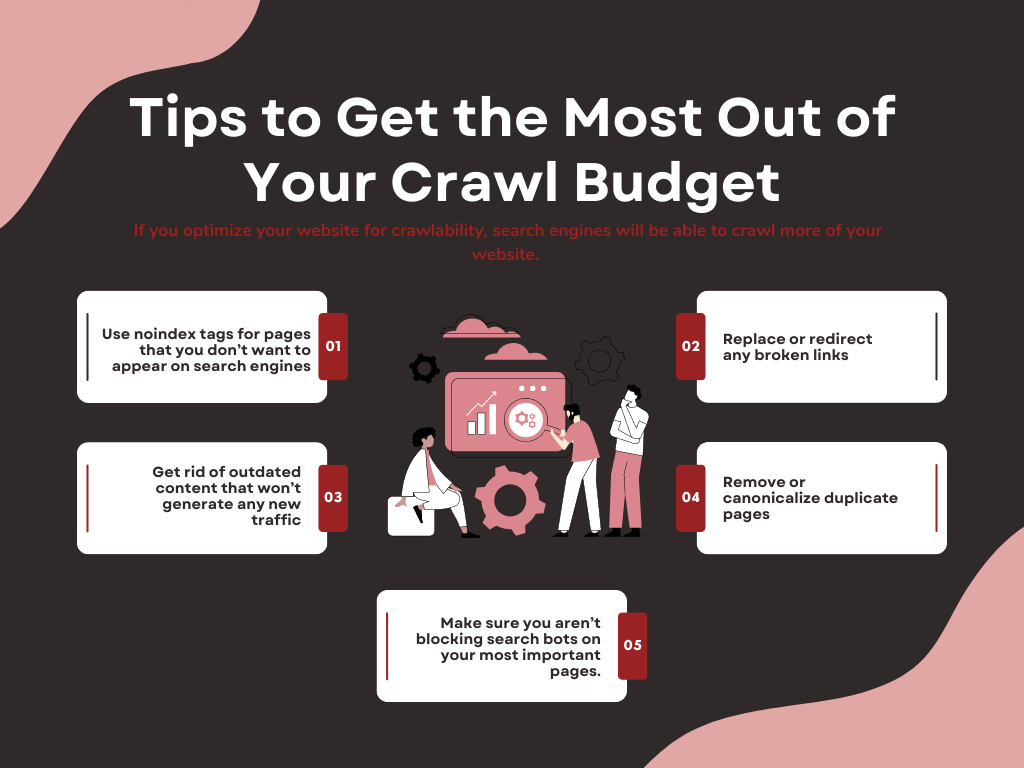

Be aware of your crawl budget

It’s crucial to know that search bots won’t crawl every single page on your website, as that would risk overwhelming your servers.

To preserve bandwidth, search bots work within a ‘crawl budget,’ which is the maximum number of web pages a bot will crawl on a website without putting too much strain on its servers.

What determines your crawl budget?

There are a few factors, one of which is crawl health.

Basically, if your site responds quickly to the crawling process, your crawl budget goes up.

So if you optimize your website for crawlability, search engines will be able to crawl more of your website. However, if certain factors slow down the crawling process, your crawl budget will decrease.

Besides not wanting to overwhelm web servers, Google also has restrictions on how many web pages it can crawl due to technical limitations.

There’s also crawl demand, which creates bigger crawl budgets for sites that are extremely popular to keep their content fresh in Google’s index.

Here are some tips to get the most out of your crawl budget:

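- Use noindex tags for pages that you don’t want to appear on search engines (e.g., login pages, admin pages, and thank you pages). Keep in mind that a bot still has to crawl a page to see its noindex tag, so if you want to stop a page from being crawled at all, disallow it in robots.txt instead.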

- Get rid of outdated content that won’t generate any new traffic.

- Make sure you aren’t blocking search bots on your most important pages.

- Replace or redirect any broken links.

- Remove or canonicalize duplicate pages (e.g., multiple colors/sizes of the same product).
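To check the "don’t block search bots" tip above, you can test your key URLs against your robots.txt rules. Here’s a minimal sketch using Python’s standard `urllib.robotparser`. The robots.txt content, the example.com URLs, and the `blocked_urls` helper are all made up for illustration, and a real audit would load your live robots.txt file instead.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real audit would fetch the live file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /login
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical list of pages you want crawled.
important_pages = [
    "https://www.example.com/",
    "https://www.example.com/services/",
    "https://www.example.com/admin/settings",
]

print(blocked_urls(ROBOTS_TXT, important_pages))
```

Any URL this prints is one that bots following robots.txt won’t crawl, so it’s worth confirming that each one is blocked on purpose.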

Create and upload an XML sitemap

An XML sitemap makes it easier for search bots to crawl and index all your web pages, as well as make sense of your site architecture.

That’s especially true if you have a larger website containing millions of URLs.

Even if you have a smaller website, it can be beneficial to create a sitemap and submit it through Google Search Console and Bing Webmaster Tools.

How can you create a sitemap?

The quickest and easiest way is to use a website crawler like Screaming Frog.

Once the tool completes an initial crawl of your website, you’ll be able to generate an XML sitemap automatically.

Before you export the sitemap, don’t forget to check the configuration settings. You’ll be able to customize your sitemap here, including the ability to include/exclude pages by response codes, images, last modified, etc.

As long as each URL in the sitemap is the single, canonical version of its page and returns a 200 (OK) status, you should be good to go.
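If you’re curious what a sitemap file actually contains, here’s a rough sketch that builds one with Python’s standard library. It isn’t how Screaming Frog works internally. The `crawl_results` data and the `build_sitemap` helper are hypothetical, and the sketch simply keeps URLs that returned a 200 status.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(crawl_results: dict[str, int]) -> str:
    """Build sitemap XML from {url: status_code}, keeping only 200 responses."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, status in sorted(crawl_results.items()):
        if status == 200:
            url_element = ET.SubElement(urlset, "url")
            ET.SubElement(url_element, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical crawl output: URL -> HTTP status code.
crawl_results = {
    "https://www.example.com/": 200,
    "https://www.example.com/blog/": 200,
    "https://www.example.com/old-page/": 404,
    "https://www.example.com/moved/": 301,
}

sitemap_xml = build_sitemap(crawl_results)
print(sitemap_xml)
```

Redirected and broken URLs are left out on purpose, since listing them sends bots to pages you don’t want indexed.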

Optimize your site architecture

Next, you need to organize your web pages to make them easy for search bots to find, crawl, and index.

If your website doesn’t feature a logical architecture, lots of web pages can get lost in the fray – leading to orphan pages and indexing errors.

A page is considered orphaned when it doesn’t have any internal links pointing to it. That makes it nearly impossible for search engines or users to find, which is bad news if the page contains valuable content that you want to rank.

The guiding principles of sound site architecture are A) to group related pages together and B) to use a flat architecture.

By flat, we mean that each page should only be a few clicks away from the homepage (typically no more than three or four).

That’s because the distance to your homepage matters in terms of SEO. The closer a piece of content is to your homepage (and the more high-quality links point to it), the more clout it will receive on search engines.

A typical site architecture looks something like this:

- Level 1: Homepage

- Level 2: About Us, Services, Resources, Products, etc.

- Level 3: Service Pages, Blog Posts, Product Pages, etc.
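You can check both ideas, click depth and orphan pages, with a simple breadth-first search over your internal links. The sketch below assumes you already have a map of which pages link to which (for example, exported from a crawler). The `site_links` data and the `click_depths` helper are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/seo-audit/"],
    "/blog/": ["/blog/technical-seo-checklist/"],
    "/blog/technical-seo-checklist/": [],
    "/services/seo-audit/": [],
    "/old-landing-page/": ["/"],  # links out, but nothing links to it
}

depths = click_depths(site_links)
# Known pages that can't be reached from the homepage (orphans fall in this group).
unreachable = sorted(set(site_links) - set(depths))
# Pages that sit more than three clicks from the homepage.
too_deep = sorted(page for page, depth in depths.items() if depth > 3)

print(depths)
print("Unreachable:", unreachable)
print("Too deep:", too_deep)
```

Here the homepage counts as depth 0, so a depth of 2 means two clicks away. Anything in the unreachable list needs at least one internal link from a page that bots can already find.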

Choose a URL structure

Also, your URLs need to follow a logical structure in the same way. Since the website is yours, it’s entirely up to you to determine the type of URL structure you’ll use.

The only rule is to stick to the structure you choose across your entire website.

You’ll get to choose between subdirectories (e.g., yourwebsite.com/blog) and subdomains (e.g., blog.yourwebsite.com); just remember to stay consistent after you select one.

If you’re conducting an SEO campaign on Bing, then you’ll want to add your target keywords to your URLs – as that’s a significant ranking factor. You can do the same for Google, although it won’t have as much of an impact on your search performance.

It’s also a good idea to keep your URLs short and sweet, and you should always use lowercase characters. For URLs that contain more than one word, use hyphens to separate them (e.g., www.yourblog.com/blog/how-to-train-your-dog).
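If you generate URLs from page titles, a small helper can apply these rules for you. Here’s one possible sketch; the `slugify` name is our own, and your CMS may already do something similar.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Lowercase a title and join its words with hyphens."""
    # Strip accents so that, for example, "é" becomes "e".
    ascii_text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Keep only runs of letters and digits, dropping punctuation and extra spaces.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words)


print(slugify("How to Train Your Dog"))  # how-to-train-your-dog
```

This simple version drops all punctuation, so decide how you want to handle apostrophes and symbols before using it across a whole site.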

#2: Indexability Optimizations

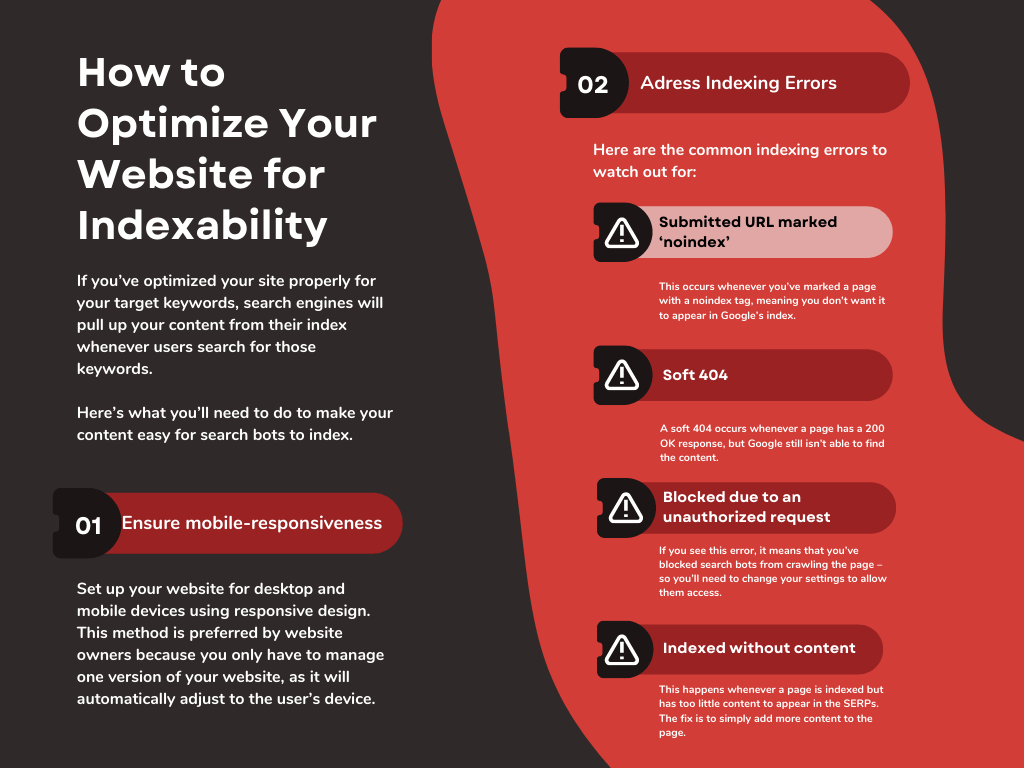

There’s a separate set of optimizations you need to make to ensure search bots can properly index your most important web pages.

Once one of your web pages is saved in a search engine’s index, it becomes eligible to rank on search engine results pages.

If you’ve optimized your site properly for your target keywords, search engines will pull up your content from their index whenever users search for those keywords.

However, if your content isn’t in a search engine’s index, it’ll never appear in user searches, even if it’s perfectly optimized for the keywords they use.

Here’s what you’ll need to do to make your content easy for search bots to index.

Ensure mobile-responsiveness

Google has used mobile-first indexing for a while now, meaning its search bots primarily crawl and index the mobile version of a website.

Why did they make the change?

It’s because mobile devices dominate the search engine market and have for some time. Mobile devices account for 56.9% of all search traffic, outdoing desktop searches and other alternatives combined.

The best way to set up your website for desktop and mobile devices is to use a responsive design. This method is preferred by website owners because you only have to manage one version of your website, as it will automatically adjust to the user’s device.

If you aren’t sure whether your website is mobile-friendly, you can audit it with Lighthouse in Chrome DevTools, since Google retired its standalone Mobile-Friendly Test in December 2023.
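One quick signal you can check yourself is whether a page declares a responsive viewport. The sketch below only looks for the viewport meta tag, which a responsive page needs but which doesn’t prove the layout actually adapts. The `ViewportFinder` class and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Record the content of the first-seen <meta name="viewport"> tag."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "meta" and (attr_map.get("name") or "").lower() == "viewport":
            if self.viewport is None:
                self.viewport = attr_map.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """True if the page sets its viewport width to the device width."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    return "width=device-width" in finder.viewport.replace(" ", "").lower()


# Hypothetical page source.
sample_html = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(sample_html))
```

Treat a missing tag as a red flag, and use a full audit tool to confirm how the page behaves on real devices.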

Address any indexing errors

Google Search Console is an invaluable tool for SEOs to use, particularly for technical SEO.

That’s because it gives you exclusive insights into how Google views your website. You’ll be able to tell if Google is able to index your website, as well as if there are any errors.

The Page Indexing report gives you a full overview of the URLs Google has indexed and the ones it has not (including the reasons why).

Not only that, but it’ll even let you know if there are any issues with pages that are already indexed but could use some optimization to perform better on the SERPs.

For pages that have indexing errors, clicking the magnifying glass icon next to a URL will show you specifics about what went wrong.

Here’s a look at some of the most common indexing errors:

- Submitted URL marked ‘noindex.’ This occurs whenever you’ve marked a page with a noindex tag, meaning you don’t want it to appear in Google’s index. If this appears on a page that you want to appear in the SERPs, you need to remove the noindex tag.

- Soft 404. A soft 404 occurs whenever a URL returns a 200 OK response, but the page looks like an error page or has little to no content. This tends to happen when content moves or is deleted. If the content moved, use a 301 redirect to the new address. If it’s gone for good, return a real 404 or 410 status.

- Blocked due to unauthorized request (401). If you see this error, it means the page requires authorization that search bots don’t have. If you want the page indexed, you’ll need to remove the login requirement or otherwise allow bots access.

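- Page indexed without content. This happens whenever a page is in Google’s index, but Google couldn’t read its content, for example because the page is cloaked or served in a format Google can’t index. The fix is to inspect the URL in Search Console to see what Google received, then make sure the content is served in a form bots can read.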
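The noindex error above is easy to check for yourself, since the directive lives either in a robots meta tag or in an `X-Robots-Tag` response header. Here’s a simplified sketch. The `is_noindexed` helper and the sample inputs are hypothetical, and it ignores bot-specific header forms such as `googlebot: noindex`.

```python
from html.parser import HTMLParser
from typing import Optional


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "meta" and (attr_map.get("name") or "").lower() in {"robots", "googlebot"}:
            content = attr_map.get("content") or ""
            self.directives.update(part.strip().lower() for part in content.split(","))


def is_noindexed(html: str, headers: Optional[dict[str, str]] = None) -> bool:
    """True if the page's meta tags or X-Robots-Tag header contain 'noindex'."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_directives = set()
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_directives.update(part.strip().lower() for part in value.split(","))
    return "noindex" in parser.directives or "noindex" in header_directives


# Hypothetical page source for a thank-you page.
print(is_noindexed('<meta name="robots" content="noindex, follow">'))
```

Run a check like this against the pages you expect to rank, and remove the tag or header wherever it shows up by mistake.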

#3: Security Optimizations

You need to make sure that your website is secure, especially if you use contact forms to collect users’ personal information.

If you run an eCommerce store, then security is even more important – as you want your customers to know that their financial information stays encrypted.

Use HTTPS

HTTPS is an absolute must for modern websites, as it means you have an SSL/TLS certificate that encrypts all data transmitted to and from your site.

This is doubly important if you sell goods on your website, as you’ll want to encrypt your customers’ sensitive financial information.

If your website uses HTTPS, you’ll see it at the start of your URL (e.g., https://www.yourwebsite.com). Many browsers also show a padlock or similar icon next to the address, which lets users know that the connection is secure.

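To make the switch, you’ll need to obtain an SSL/TLS certificate (many hosts include one for free through Let’s Encrypt) and install it on your web hosting account. Then use 301 redirects to send your HTTP URLs to their HTTPS versions.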
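Certificates expire, so it’s worth adding an expiry check to your audits. The sketch below uses Python’s standard `ssl` module. The helper names are our own, `fetch_not_after` needs a live network connection, and the date used in the example is just a sample value.

```python
import socket
import ssl
import time
from typing import Optional


def fetch_not_after(hostname: str, port: int = 443, timeout: float = 5.0) -> str:
    """Connect over TLS and return the certificate's 'notAfter' string (needs network)."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days between 'now' (epoch seconds) and the certificate's expiry date."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires_at - current) / 86400


# Sample expiry string in the format getpeercert() returns.
sample_not_after = "Jan  5 09:34:43 2018 GMT"
thirty_days_before = ssl.cert_time_to_seconds(sample_not_after) - 30 * 86400
print(days_until_expiry(sample_not_after, now=thirty_days_before))  # 30.0
```

In a real audit you would call `days_until_expiry(fetch_not_after("www.yourwebsite.com"))` and flag anything that’s only a few weeks from expiring.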

#4: Page Speed Optimizations

Lastly, your website needs lightning-fast load times to rank high on search engine results pages.

That’s especially true for Google, as its Core Web Vitals metrics measure whether your pages load quickly, respond promptly to user input, and stay visually stable.
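Core Web Vitals currently consist of three metrics: Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). Google publishes "good" and "poor" thresholds for each. Here’s a small sketch that rates a page against those thresholds; the `rate_metric` helper and the sample measurements are hypothetical.

```python
# Thresholds published by Google: (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, in seconds
    "INP": (200, 500),   # Interaction to Next Paint, in milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless score
}


def rate_metric(name: str, value: float) -> str:
    """Rate a measurement as 'good', 'needs improvement', or 'poor'."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for one page.
page_metrics = {"LCP": 2.1, "INP": 350, "CLS": 0.3}
for metric, value in page_metrics.items():
    print(metric, value, rate_metric(metric, value))
```

You can pull real measurements from tools such as PageSpeed Insights or the Core Web Vitals report in Google Search Console.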

Here’s how to improve your website’s loading speed.

Consider using a CDN (content delivery network)

CDNs are great for loading speed because they store multiple copies of your website in different geographic locations.

From there, they serve each visitor from the copy that’s closest to their location, which cuts down load times.

Don’t use too many plugins

Does your website have a ton of outdated plugins? If so, they could be slowing down your site speed, and they can also be a security risk. Remove the ones you don’t use and keep the rest up to date.

Compress images and videos

Videos and images take up a ton of bandwidth, especially if they’re high resolution. To minimize their impact on your site speed, compress your images and videos, and minify and compress your CSS and JavaScript files.
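To get a feel for how much text files like CSS and JavaScript can shrink, here’s a quick sketch using Python’s built-in `gzip` module. The sample stylesheet and the `compression_report` helper are made up, and in practice your web server or CDN usually applies this compression for you. Images and videos need format-specific tools instead.

```python
import gzip


def compression_report(data: bytes) -> tuple[int, int, float]:
    """Return (original size, gzip-compressed size, fraction of bytes saved)."""
    compressed = gzip.compress(data)
    saved = 1 - len(compressed) / len(data)
    return len(data), len(compressed), saved


# Hypothetical stylesheet with lots of repeated rules.
css = (".button { color: #ffffff; background-color: #0055aa; padding: 8px 16px; }\n" * 200).encode("utf-8")

original, compressed, saved = compression_report(css)
print(f"{original} bytes -> {compressed} bytes ({saved:.0%} smaller)")
```

Repetitive text like this compresses very well, so your real files will likely see smaller (but still worthwhile) savings.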

Final Thoughts: Technical SEO Checklist

Technical SEO can make or break your performance on search engines, so it’s not something to overlook.

As long as you carefully cross off each item on this checklist, you’ll enjoy airtight technical SEO, allowing your on-page efforts to truly shine.

Do you need help with technical SEO for your website?

Then check out HOTH X, our managed SEO services. Our digital marketing experts know what it takes to conduct winning SEO campaigns, so get in touch today.